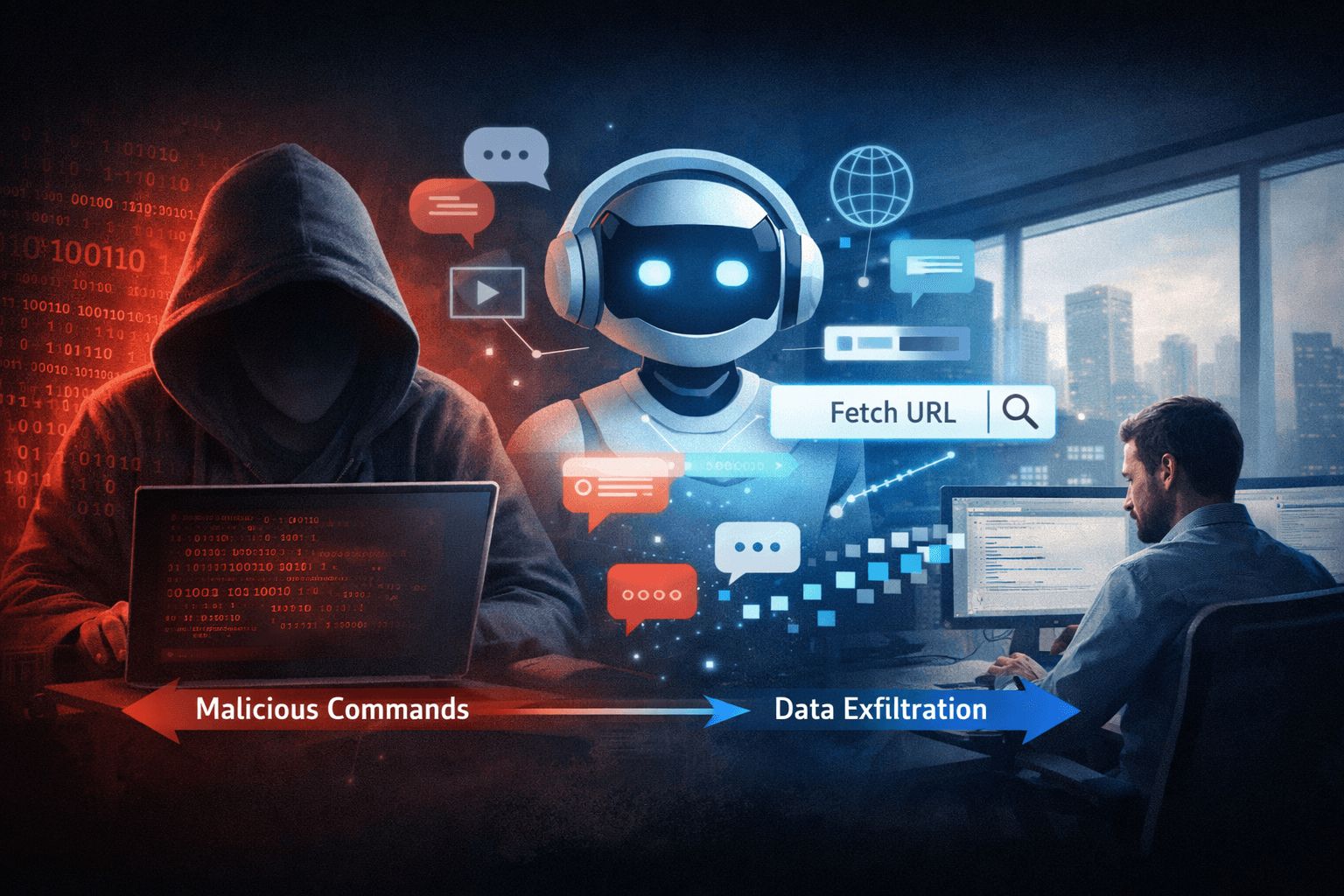

A new class of post-compromise technique is forcing enterprise security teams to rethink their threat models. Researchers at Check Point demonstrated in 2024 that AI assistants — including Microsoft Copilot and xAI Grok — can be weaponized as command-and-control (C2) proxies, enabling attackers to relay malicious instructions through tools your organization already trusts. No API keys required. No suspicious binaries. Just natural language queries blending invisibly into everyday enterprise traffic.

This technique represents a significant evolution beyond traditional C2 infrastructure and living-off-the-land binaries (LOLBins). When malware can instruct a compromised host to ask an AI assistant to "fetch this URL and tell me what it says," the resulting traffic looks indistinguishable from a developer researching documentation. Security teams face a detection gap that conventional tools were never designed to close. This article breaks down how AI-assisted C2 works, why it evades detection, and what your organization can do about it now.

How Attackers Abuse AI Assistants for Command-and-Control

Traditional C2 infrastructure leaves fingerprints — domain generation algorithms, hardcoded IPs, unusual outbound ports. AI-assisted C2 leaves almost none of these. Understanding the mechanics is the first step toward building a defense.

The Core Technique: Prompt-Driven Relay

Once an attacker gains an initial foothold on a compromised host, malware instructs the system to submit crafted prompts to an AI assistant with web browsing or URL-fetching capabilities. The AI retrieves attacker-controlled content from a remote URL, then returns the parsed response to the malware. That response may contain encoded commands, updated payloads, or exfiltration instructions — all delivered through the AI's legitimate HTTPS channel.

The attack flow follows a consistent pattern:

- Malware executes on a compromised endpoint with access to an AI assistant

- A crafted natural language prompt requests the AI fetch a specific attacker-controlled URL

- The AI retrieves and processes the remote content, returning a structured response

- Malware parses the response and executes embedded commands

- Data exfiltration occurs through subsequent AI queries, encoding output in prompt text

Why No API Keys Are Needed

This technique exploits the natural language interface of AI assistants directly — through browser sessions, desktop clients, or enterprise integrations already authenticated by the victim's credentials. The attacker never touches the AI provider's API directly. From the AI provider's perspective, every interaction looks like a legitimate authenticated user making a reasonable request.

Pro Tip: This means your existing API monitoring and key management controls offer zero protection against this technique. Detection must happen at the endpoint and network behavior layer, not the API layer.
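
To make the endpoint-layer point concrete, here is a minimal Python sketch that correlates outbound connections with the process that opened them. It assumes you already collect process-level network telemetry (for example, from an EDR agent). The `ConnectionEvent` fields and the `EXPECTED_PROCESSES` allowlist are hypothetical, and the two domains are simply the ones named in this article.

```python
from dataclasses import dataclass

# Domains named in this article; extend for the AI services you actually use.
AI_DOMAINS = {"copilot.microsoft.com", "grok.x.ai"}

# Hypothetical allowlist of processes expected to talk to AI assistants.
EXPECTED_PROCESSES = {"msedge.exe", "chrome.exe", "firefox.exe"}


@dataclass(frozen=True)
class ConnectionEvent:
    """One outbound connection record (field names are illustrative)."""
    host: str
    process_name: str
    destination: str


def is_ai_destination(destination: str) -> bool:
    """True if the destination is an AI domain or a subdomain of one."""
    dest = destination.lower().rstrip(".")
    return any(dest == d or dest.endswith("." + d) for d in AI_DOMAINS)


def suspicious_ai_connections(events):
    """Return events where an unexpected process reached an AI endpoint."""
    return [
        e for e in events
        if is_ai_destination(e.destination)
        and e.process_name.lower() not in EXPECTED_PROCESSES
    ]
```

An allowlist like this will not catch malware that drives a real browser, so treat a hit as a lead for investigation rather than a verdict.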

Why This Technique Evades Traditional Detection

Understanding the detection gap is critical before organizations can design effective mitigations. AI-assisted C2 defeats several layers of conventional security simultaneously.

Traffic Blends with Legitimate Enterprise Use

Microsoft Copilot and xAI Grok are increasingly common in enterprise environments. Security information and event management (SIEM) rules tuned to flag unusual outbound connections will not trigger on traffic destined for copilot.microsoft.com or grok.x.ai. These are trusted, certificate-validated endpoints your firewall is almost certainly configured to allow.

Table: AI-Assisted C2 vs. Traditional C2 Detection Characteristics

| Characteristic | Traditional C2 | AI-Assisted C2 |
|---|---|---|
| Network destination | Unknown/suspicious domains | Trusted AI service endpoints |
| Protocol | Often custom or obfuscated | Standard HTTPS |
| Attacker infrastructure or API keys | Required (custom infra) | Not required |
| Traffic volume | Anomalous spikes common | Matches normal user behavior |
| Payload encoding | Binary or encoded blobs | Natural language text |
| DLP detection difficulty | Moderate | Very high |

Adaptive Commands Defeat Static Signatures

Signature-based detection keys on fixed artifacts such as known domains, payload hashes, and hardcoded strings. AI-assisted C2 lets attackers modify instructions in real time by simply updating the content at the fetched URL. An implant that retrieved commands yesterday may receive entirely different instructions today, with no change to the malware binary and no new infrastructure to fingerprint. This adaptability sharply limits what signature-based detection can catch and makes behavioral baselines harder to establish.

Mimicry of Normal Query Patterns

Security teams monitoring for anomalous user behavior face a compounding challenge. A user asking Copilot ten questions per hour looks identical to malware submitting ten crafted prompts per hour. Without semantic analysis of prompt content at scale, distinguishing the two is extremely difficult in practice.
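
Volume alone cannot separate the two, but timing sometimes can. The sketch below is an illustration rather than a tested detector. It computes the coefficient of variation of the gaps between prompts, on the assumption that human usage is usually irregular while a naive implant polling on a timer produces nearly uniform gaps. The function names and the 0.1 threshold are assumptions, and an implant that adds jitter would evade this check.

```python
from statistics import mean, pstdev


def interarrival_cv(timestamps):
    """Coefficient of variation of gaps between prompt times (in seconds).

    Returns None when there are too few events to measure regularity.
    """
    if len(timestamps) < 3:
        return None
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average_gap = mean(gaps)
    if average_gap == 0:
        return 0.0
    return pstdev(gaps) / average_gap


def looks_scheduled(timestamps, cv_threshold=0.1):
    """True when prompt timing is suspiciously regular (threshold is assumed)."""
    cv = interarrival_cv(timestamps)
    return cv is not None and cv < cv_threshold
```

For example, prompts sent exactly every six minutes yield a coefficient of variation of zero, while a person's irregular sessions typically score well above the threshold.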

The Threat Landscape: How This Fits Post-Compromise Operations

AI-assisted C2 is not an initial access technique — it is a post-compromise capability. Contextualizing it within the MITRE ATT&CK framework helps security teams understand where to focus defensive investment.

Mapping to MITRE ATT&CK

This technique touches several ATT&CK tactics:

- Command and Control (TA0011): Using AI assistants as proxy channels for C2 communication

- Exfiltration (TA0010): Encoding sensitive data within natural language prompts to AI tools

- Defense Evasion (TA0005): Blending malicious traffic with legitimate enterprise AI usage

Table: MITRE ATT&CK Technique Mapping for AI-Assisted C2

| ATT&CK Tactic | Technique ID | AI C2 Behavior |
|---|---|---|
| Command and Control | T1071.001 | HTTPS to trusted AI endpoints |
| Defense Evasion | T1036 | Masquerading as legitimate user traffic |
| Exfiltration | T1567 | Data encoded in outbound AI prompts |
| Execution | T1059 | AI responses parsed and executed locally |
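
One way to put this mapping to work is a quick coverage check: tag each detection rule with the tactics it addresses, then list the tactics that no rule covers. The sketch below is a minimal Python illustration. The tactic IDs are the standard ATT&CK identifiers for the tactics named above, while the rule names are hypothetical placeholders for whatever detections your team maintains.

```python
# ATT&CK tactic IDs for the tactics discussed in this section.
TACTICS = {
    "TA0011": "Command and Control",
    "TA0010": "Exfiltration",
    "TA0005": "Defense Evasion",
    "TA0002": "Execution",
}

# Hypothetical detection rules, each tagged with the tactics it addresses.
DETECTION_RULES = {
    "ai_endpoint_from_non_browser_process": ["TA0011", "TA0005"],
    "encoded_blob_in_ai_prompt": ["TA0010"],
}


def coverage_gaps(tactics, rules):
    """Return the sorted tactic IDs that no detection rule is tagged with."""
    covered = {tactic for tagged in rules.values() for tactic in tagged}
    return sorted(t for t in tactics if t not in covered)
```

With the placeholder rules above, the Execution tactic is the one left without a detection, which is the kind of gap a purple team exercise should then test.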

Evolution Beyond LOLBins

Living-off-the-land techniques abuse legitimate system binaries — PowerShell, certutil, mshta — to execute attacker commands without dropping custom malware. AI-assisted C2 extends this philosophy to cloud-hosted AI services, dramatically expanding the attack surface while reducing the attacker's infrastructure footprint. Organizations that have invested heavily in LOLBin detection now face a parallel evasion class that their existing rules were not designed to catch.

Mitigations: Defending AI-Integrated Environments

The good news is that practical mitigations exist, though they require deliberate implementation. The following controls address the core exposure points this technique exploits.

Restrict AI Assistant Web Browsing Capabilities

The most direct mitigation is disabling or restricting the URL-fetching and web browsing features of AI assistants deployed in your environment. Microsoft Copilot, for example, offers administrative controls to limit browsing scope. Apply the principle of least privilege to AI tool capabilities just as you would to user accounts.

Key configuration controls to evaluate:

- Disable AI assistant web browsing where not operationally required

- Restrict AI integrations to internal data sources only where possible

- Apply conditional access policies to AI tool authentication

- Enforce data loss prevention (DLP) policies on AI assistant interactions

Monitor for Prompt Anomalies and Behavioral Indicators

Organizations should establish baseline behavior for AI assistant usage across their environment, then monitor for deviations. Indicators worth investigating include:

- AI assistant queries originating from non-interactive processes or service accounts

- High-frequency, repetitive prompt patterns inconsistent with human typing

- Prompts containing encoded strings, URL patterns, or unusual formatting

- AI tool usage from hosts where no human user is actively logged in
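
The content-based indicator in this list can be approximated with simple heuristics once prompt text is available to you. The following Python sketch assumes you can export prompts as plain strings. The regular expressions, the 40-character cutoff, and the entropy threshold are illustrative assumptions that would need tuning against your own traffic.

```python
import math
import re
from collections import Counter

URL_RE = re.compile(r"https?://[^\s\"'<>]+", re.IGNORECASE)
# Runs of 40+ characters from the base64 alphabets (cutoff is an assumption).
BLOB_RE = re.compile(r"[A-Za-z0-9+/=_-]{40,}")


def shannon_entropy(text):
    """Shannon entropy of the string, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    return sum(
        -(n / total) * math.log2(n / total) for n in Counter(text).values()
    )


def prompt_indicators(prompt, entropy_threshold=4.5):
    """Return a list of heuristic indicator names found in the prompt."""
    indicators = []
    if URL_RE.search(prompt):
        indicators.append("contains_url")
    # Long runs with high entropy suggest encoded data, not prose.
    if any(shannon_entropy(run) >= entropy_threshold
           for run in BLOB_RE.findall(prompt)):
        indicators.append("high_entropy_blob")
    return indicators
```

Plenty of legitimate prompts contain URLs, so these indicators are most useful when combined with process and timing context rather than alerted on individually.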

Important: Prompt-level monitoring requires integration with AI provider logging APIs or endpoint-based monitoring tools. Review what telemetry your AI platforms expose and configure it before you need it.

Apply Zero Trust Principles to AI Tool Access

A Zero Trust architecture assumes breach and verifies every access request. Apply this model to AI assistant access by requiring device compliance checks, limiting which endpoints can reach AI services, and segmenting AI tool access away from high-value systems. If a compromised host sits in a segment with no route to AI services, malware running on it cannot reach the AI endpoints it needs to complete the C2 relay.
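
The access decision described here can be sketched as a default-deny policy function. This Python example is a simplified illustration, not a real policy engine. The `AccessRequest` fields and the segment name are hypothetical, and in practice this logic would live in your conditional access or network policy tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    """Attributes of a request to reach an AI service (illustrative fields)."""
    device_compliant: bool
    network_segment: str


# Hypothetical segment allowed to reach AI services; high-value segments
# are denied simply by not being listed here.
AI_ALLOWED_SEGMENTS = {"corp-workstations"}


def evaluate_ai_access(request):
    """Default-deny check: return (allowed, reasons_for_denial)."""
    reasons = []
    if not request.device_compliant:
        reasons.append("device failed compliance check")
    if request.network_segment not in AI_ALLOWED_SEGMENTS:
        reasons.append("segment not permitted to reach AI services")
    return (not reasons, reasons)
```

Returning the denial reasons alongside the decision makes it easier to log why a request was blocked and to spot hosts that repeatedly attempt AI access from segments where it is not expected.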

Table: Mitigation Controls by Implementation Priority

| Control | Priority | Complexity | Effectiveness |
|---|---|---|---|
| Disable AI web browsing | High | Low | High |
| Prompt anomaly monitoring | High | Medium | Medium |
| Network segmentation for AI access | High | Medium | High |
| DLP on AI interactions | Medium | Medium | Medium |
| AI usage baseline + alerting | Medium | High | Medium |
| Endpoint behavioral analysis | Medium | High | High |

Key Takeaways

- Disable AI assistant web browsing in enterprise environments unless there is a clear operational requirement — this is the single most effective mitigation available today

- Map AI-assisted C2 into your threat model using MITRE ATT&CK TA0011 (Command and Control), TA0010 (Exfiltration), and TA0005 (Defense Evasion) to ensure detection coverage addresses this evasion class

- Establish AI usage baselines across your environment so anomalous prompt frequency or non-human usage patterns trigger investigation

- Apply least privilege to AI tool capabilities, treating AI assistant permissions with the same rigor you apply to user and service account access

- Enable all available logging from AI platforms in use and integrate that telemetry into your SIEM for correlation with endpoint and network data

- Brief your threat intelligence and red teams on this technique so purple team exercises can validate detection coverage before attackers exploit the gap

Conclusion

AI-assisted C2 is not a theoretical future threat — it is a demonstrated technique, validated by Check Point researchers against production AI tools that millions of enterprises use daily. The same capabilities that make AI assistants productive make them exploitable: trusted network paths, natural language interfaces, and web access with no friction. Organizations that treat AI tool governance as a productivity question rather than a security question are accepting risk they may not yet have quantified.

Closing this gap requires action at the administrative, architectural, and detection layers. Restricting AI browsing capabilities, establishing usage baselines, and integrating AI platform telemetry into existing security operations are achievable steps your team can begin evaluating now. Incorporate AI-assisted C2 into your threat modeling sessions and ensure your next purple team exercise tests for it explicitly.

Frequently Asked Questions

Q: Does this attack technique require the attacker to compromise the AI provider itself?

A: No. The attacker never needs to access or compromise Microsoft, xAI, or any AI provider. The technique exploits the AI assistant's legitimate web browsing capability from within the victim's already-compromised environment, using the victim's own authenticated session.

Q: Which AI assistants are vulnerable to being used this way?

A: Any AI assistant with the ability to fetch external URLs or browse the web is potentially exploitable as a C2 relay. Check Point demonstrated the technique specifically against Microsoft Copilot and xAI Grok, but the underlying method applies broadly to AI tools with similar capabilities.

Q: Will disabling AI web browsing fully eliminate this risk?

A: Disabling web browsing significantly reduces the risk by removing the relay mechanism, but organizations should still monitor AI usage for anomalous patterns. Attackers may find alternative methods as AI tool capabilities evolve, so defense in depth remains essential.

Q: How does this technique differ from traditional living-off-the-land (LOLBin) attacks?

A: LOLBin attacks abuse built-in OS binaries like PowerShell or certutil to execute commands locally. AI-assisted C2 extends this concept to cloud-hosted AI services, using them as trusted communication proxies. This dramatically reduces the attacker's infrastructure footprint and leverages a detection blind spot that most LOLBin defenses do not cover.

Q: What compliance frameworks require organizations to address this type of threat?

A: Several frameworks have relevant requirements. NIST CSF 2.0 addresses supply chain and third-party risk controls applicable to AI tools. ISO 27001 requires organizations to assess and treat risks from external services. CIS Controls v8 specifically addresses secure configuration and monitoring of enterprise software, which extends to AI assistant deployments. Organizations subject to HIPAA, PCI DSS, or SOC 2 should evaluate whether AI tool usage introduces reportable data handling risks.
