Artificial intelligence infrastructure is expanding faster than the security controls designed to protect it. A single misconfigured inference API or unprotected model management interface can hand an attacker the keys to your entire AI ecosystem — including the databases, services, and enterprise data your large language model (LLM) is connected to. Security researchers increasingly flag LLM endpoints as one of the most underestimated attack surfaces in modern enterprise environments.

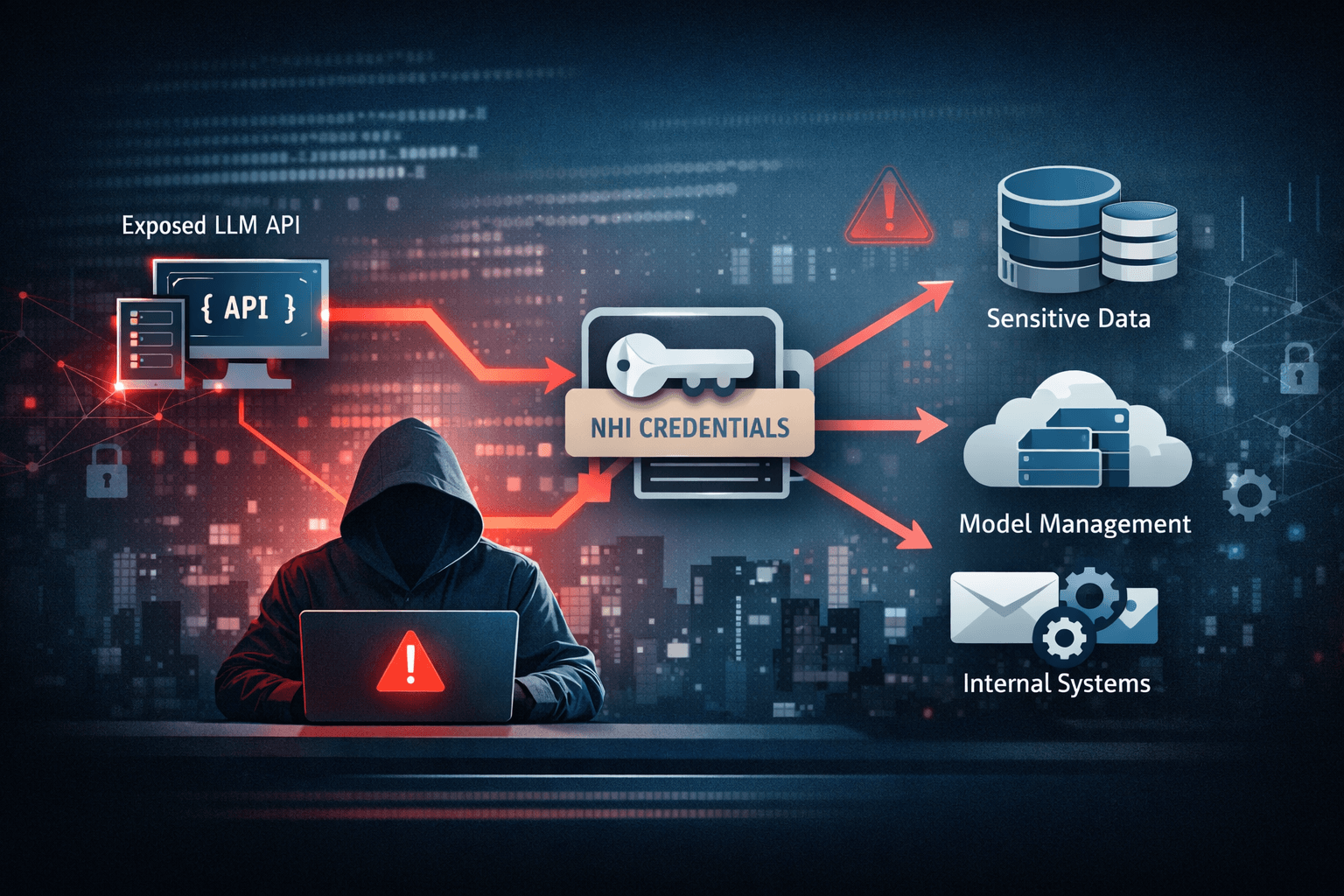

The problem is not theoretical. As organizations race to integrate LLMs into production workflows, exposed endpoints are multiplying alongside them. Excessive permissions, static credentials, and implicit trust relationships between AI components create conditions where a single compromised endpoint can trigger cascading access across interconnected systems. Non-Human Identities (NHIs) — the service accounts and API tokens that LLMs use to interact with other systems — are emerging as a particularly dangerous weak point.

This article examines how exposed LLM endpoints expand your attack surface, why NHIs amplify the risk, and what security teams must do to regain control before attackers do.

The LLM Attack Surface: Understanding Exposed Endpoint Paths

LLM infrastructure introduces a unique set of exposure paths that differ meaningfully from traditional web application risk. The combination of broad system access, implicit trust, and high data sensitivity makes misconfigured endpoints exceptionally dangerous.

Public APIs Without Authentication

Inference APIs, the interfaces through which applications send prompts and receive model outputs, are frequently exposed to the internet with inadequate or absent authentication controls. In many observed deployments, these APIs rely on weak static tokens embedded in application code or, in some cases, on no authentication at all. An attacker who discovers an exposed inference endpoint can query the model directly, probe its system prompt, and potentially extract sensitive context loaded into the model's context window at runtime.

The risk compounds when you consider that LLMs are routinely configured with access to internal databases, document stores, and enterprise services. What begins as an exposed API query can quickly become unauthorized data retrieval from systems the attacker would never otherwise reach.
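
To make the authentication gap concrete, here is a minimal sketch of a fail-closed bearer-token check that could sit in front of an inference API. The token store, the client name, and the `authenticate` function are hypothetical and only for illustration; it assumes tokens are stored as SHA-256 digests and loaded from a secrets manager rather than hard-coded as they are here.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical token store: client name -> SHA-256 digest of its issued token.
# Assumption: a real deployment loads these digests from a secrets manager.
TOKEN_DIGESTS = {
    "reporting-app": hashlib.sha256(b"example-token-for-illustration").hexdigest(),
}


def authenticate(headers: dict) -> Optional[str]:
    """Return the client name for a valid bearer token, or None to reject."""
    scheme, _, token = headers.get("Authorization", "").partition(" ")
    if scheme.lower() != "bearer" or not token:
        return None  # missing or malformed header: fail closed
    presented = hashlib.sha256(token.encode()).hexdigest()
    for client, digest in TOKEN_DIGESTS.items():
        # compare_digest runs in constant time, so timing does not leak a partial match
        if hmac.compare_digest(presented, digest):
            return client
    return None
```

The point of the sketch is the default: any request that does not prove its identity is rejected before a prompt ever reaches the model. A static token checked this way is still a static token, so it needs the rotation and scoping controls discussed later in this article.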

Cloud Misconfigurations and Model Management Interfaces

Model management interfaces — used for fine-tuning, monitoring, and updating deployed models — represent another high-value exposure path. These interfaces are frequently misconfigured in cloud environments, left accessible via overly permissive security group rules or identity and access management (IAM) policies that grant far more access than the role requires. According to cloud security research (Industry Reports, 2024), misconfigured cloud resources remain the leading cause of data breaches in cloud-native environments, and AI infrastructure is not immune to this pattern.

Important: Model management interfaces often carry administrative-level access to your AI deployment pipeline. Treat them with the same access controls you apply to production database consoles.
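
One way to catch this class of misconfiguration early is to scan exported firewall or security group rules for management ports that accept traffic from any source. The sketch below assumes a simplified, hypothetical rule format (`group`, `cidr`, `from_port`, `to_port`) and hypothetical management ports; real cloud provider exports have different field names and would need to be mapped into this shape first.

```python
# Hypothetical ports on which a model management interface listens.
MANAGEMENT_PORTS = {8080, 8443}
ANY_SOURCE = {"0.0.0.0/0", "::/0"}


def find_world_open_rules(rules):
    """Return (group, ports) pairs where a management port is open to any source."""
    findings = []
    for rule in rules:
        exposed = sorted(
            p for p in MANAGEMENT_PORTS if rule["from_port"] <= p <= rule["to_port"]
        )
        if rule["cidr"] in ANY_SOURCE and exposed:
            findings.append((rule["group"], exposed))
    return findings


rules = [
    {"group": "sg-inference", "cidr": "10.0.0.0/8", "from_port": 8443, "to_port": 8443},
    {"group": "sg-model-admin", "cidr": "0.0.0.0/0", "from_port": 8000, "to_port": 9000},
]
print(find_world_open_rules(rules))
# [('sg-model-admin', [8080, 8443])]
```

A check like this is cheap to run in a deployment pipeline, which is where it is most likely to stop an overly permissive rule before it reaches production.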

Table: Common LLM Endpoint Exposure Paths

| Exposure Path | Root Cause | Attacker Capability | Risk Level |
|---|---|---|---|
| Public inference API | Missing or weak auth | Prompt injection, data exfiltration | Critical |
| Model management interface | Cloud misconfiguration | Pipeline manipulation, model poisoning | Critical |
| Embedded static API tokens | Secrets sprawl | Credential theft, lateral movement | High |
| Overpermissioned IAM roles | Least-privilege failure | Cross-service access abuse | High |
| Unmonitored tool integrations | Blind trust in LLM outputs | Indirect prompt injection | High |

Why NHIs Turn Exposed Endpoints Into Force Multipliers

Non-Human Identities are the invisible connective tissue of modern AI infrastructure. Every time your LLM queries a database, calls an external API, or writes to a storage bucket, it does so through an NHI — a service account, API key, or OAuth token. These identities are proliferating faster than most security teams can track, and they carry risks that traditional identity security programs were not designed to address.

Secrets Sprawl and Static Credentials

Unlike human users who authenticate interactively, NHIs typically rely on long-lived secrets — API keys and tokens that are generated once, embedded in configuration files or environment variables, and rarely if ever rotated. This secrets sprawl creates an enormous attack surface. A single compromised repository, misconfigured storage bucket, or leaked environment file can expose dozens of NHI credentials simultaneously, each granting a different level of access to connected systems.

MITRE ATT&CK lists Credential Access (TA0006) and Lateral Movement (TA0008) as distinct adversary tactics, and stolen NHI credentials serve both. An attacker who obtains a service account token from an exposed LLM endpoint does not need to brute-force further access, because the credential itself grants it.
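
Secrets sprawl is easier to reason about once you can see it. The following is a minimal sketch of a scanner that flags likely credentials in configuration text. The two patterns are illustrative assumptions, not a complete rule set, and `scan_text` is a hypothetical helper; dedicated secret scanners cover far more formats and add entropy checks.

```python
import re

# Illustrative patterns only; dedicated scanners ship far larger rule sets.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_assignment": re.compile(
        r"(?i)(?:api[_-]?key|secret|token)\s*[:=]\s*['\"]?[A-Za-z0-9_\-]{16,}"
    ),
}


def scan_text(text: str):
    """Return (line_number, pattern_name) pairs; the secret value is never echoed."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((number, name))
    return hits
```

Reporting only the line number and pattern name is a deliberate choice: a scanner that prints the matched value into logs or CI output creates one more place for the credential to leak.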

Excessive Permissions and Rare Rotation

NHIs are routinely provisioned with far more access than their function requires. A service account created to allow an LLM to read from a document store may also have write permissions, deletion rights, or access to unrelated data repositories — because narrowing those permissions takes time that deployment teams rarely allocate. Combined with rotation cycles that may stretch to months or years, these over-permissioned identities become high-value, long-duration targets for attackers.

Pro Tip: Audit every NHI in your LLM infrastructure against the principle of least privilege. If a service account can do more than its documented function requires, it is over-permissioned by definition.
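
The audit described in the tip above reduces to a set difference between what an identity holds and what its documented function needs. This sketch assumes you can export both as sets of permission strings; the identity names, the permission format, and `audit_permissions` are hypothetical.

```python
def audit_permissions(granted: dict, documented: dict) -> dict:
    """Map each NHI to the permissions it holds beyond its documented function."""
    report = {}
    for identity, permissions in granted.items():
        # An NHI with no documented function is treated as entirely excess.
        required = documented.get(identity, set())
        excess = set(permissions) - set(required)
        if excess:
            report[identity] = sorted(excess)
    return report


granted = {
    "llm-doc-reader": {"documents:read", "documents:write", "documents:delete"},
    "llm-ticket-bot": {"tickets:create"},
}
documented = {
    "llm-doc-reader": {"documents:read"},
    "llm-ticket-bot": {"tickets:create"},
}
print(audit_permissions(granted, documented))
# {'llm-doc-reader': ['documents:delete', 'documents:write']}
```

Treating an undocumented identity as fully over-permissioned is a conservative default: it forces someone to write down what the account is for before it passes the audit.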

Table: NHI Risk Factors in LLM Environments

| Risk Factor | Description | Security Impact |
|---|---|---|
| Static long-lived credentials | Keys rarely rotated | Extended attacker dwell time |
| Excessive permissions | Broader access than function requires | Lateral movement enablement |
| Secrets sprawl | Credentials embedded across codebases | High exposure surface |
| No session monitoring | NHI activity not logged or reviewed | Blind spots for detection |
| Implicit trust in LLM outputs | Actions taken without validation | Tool abuse and data exfiltration |

How Attackers Exploit LLM Endpoints and NHI Credentials

Understanding the attacker's perspective is essential for building effective defenses. LLM endpoints are not just vulnerable — they are actively attractive targets because they sit at the intersection of data access, automation, and implicit organizational trust.

Prompt-Driven Exfiltration and Indirect Injection

When an LLM has access to internal data through retrieval-augmented generation (RAG) pipelines or connected tool integrations, an attacker who controls the model's input can manipulate its output to extract that data. This technique — known as prompt-driven exfiltration — requires no exploitation of a traditional vulnerability. The attacker simply crafts inputs that cause the model to retrieve and surface sensitive information it was never intended to share externally.

Indirect prompt injection extends this further. An attacker plants malicious instructions in content the LLM is likely to process — a document, a webpage, or an email — and those instructions redirect the model's behavior when it encounters them. In environments where LLMs have write access to systems or can trigger automated actions, indirect injection can initiate unauthorized transactions, data transfers, or configuration changes without any direct attacker interaction after the initial setup.
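
Because injected instructions arrive through content the model is supposed to read, one practical defense is to validate what the model asks to do rather than trusting what it was told. The sketch below gates model-proposed tool calls: read-only tools pass, state-changing tools wait for human approval, and everything else is denied. The tool names, the `ToolCall` type, and `gate` are hypothetical, and this is one possible policy shape rather than a complete defense against injection.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    name: str   # tool the model asked to invoke
    args: dict  # arguments proposed by the model (untrusted)


# Hypothetical tool classification for illustration.
READ_ONLY_TOOLS = {"search_documents"}
APPROVAL_REQUIRED_TOOLS = {"send_email", "update_record"}


def gate(call: ToolCall, approved_by_human: bool = False) -> str:
    """Return 'allow', 'hold', or 'deny' for a model-proposed tool call."""
    if call.name in READ_ONLY_TOOLS:
        return "allow"
    if call.name in APPROVAL_REQUIRED_TOOLS:
        # State-changing actions never run on the model's say-so alone.
        return "allow" if approved_by_human else "hold"
    return "deny"  # unknown tools are rejected by default
```

With this in place, an instruction hidden in a document can still influence what the model proposes, but a proposed `send_email` call stops at `"hold"` until a person approves it.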

Lateral Movement via Compromised NHI Credentials

Once an attacker obtains an NHI credential from an exposed endpoint, the path to lateral movement is often unobstructed. The stolen token authenticates against connected services exactly as the legitimate LLM would, meaning network-level detection based on behavioral baselines may not flag the activity. Without robust session monitoring and anomaly detection tied specifically to NHI behavior, compromised credentials can enable prolonged unauthorized access across an organization's AI ecosystem and the enterprise systems it touches.
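
A stolen token looks legitimate at the authentication layer, so detection has to compare how the identity is used against how it normally behaves. Here is a minimal sketch of that comparison, assuming a hypothetical per-identity baseline of expected services and source networks and a simplified event format.

```python
import ipaddress

# Hypothetical baseline: which services each NHI normally calls, and from where.
BASELINE = {
    "llm-rag-reader": {
        "services": {"document-store"},
        "networks": [ipaddress.ip_network("10.20.0.0/16")],
    },
}


def flag_event(event: dict) -> list:
    """Return the reasons an NHI access event deviates from its baseline."""
    profile = BASELINE.get(event["identity"])
    if profile is None:
        return ["unknown identity"]
    reasons = []
    if event["service"] not in profile["services"]:
        reasons.append("service outside baseline")
    source = ipaddress.ip_address(event["source_ip"])
    if not any(source in network for network in profile["networks"]):
        reasons.append("source network outside baseline")
    return reasons
```

A token for `llm-rag-reader` that suddenly reaches a billing database from an external address would return both reasons, even though the credential itself is valid.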

Table: LLM-Specific Attack Techniques Mapped to MITRE ATT&CK

| Attack Technique | MITRE Tactic | LLM-Specific Vector |
|---|---|---|
| Prompt-driven exfiltration | Exfiltration (TA0010) | RAG pipeline abuse |
| Indirect prompt injection | Initial Access (TA0001) | Malicious document processing |
| NHI credential theft | Credential Access (TA0006) | Exposed API token harvest |
| Lateral movement via token | Lateral Movement (TA0008) | Cross-service NHI abuse |
| Tool abuse | Execution (TA0002) | Unauthorized LLM-driven actions |

Securing Exposed LLM Endpoints: Mitigation Strategies That Work

Prevention alone is insufficient when dealing with LLM endpoint security. The dynamic, highly connected nature of AI infrastructure means that some level of exposure is often unavoidable during development and deployment. The goal is to minimize the blast radius of any compromise and eliminate the conditions that allow attackers to move freely once inside.

Least-Privilege Access and Just-in-Time Provisioning

Every NHI in your LLM infrastructure should be provisioned with the minimum permissions required for its specific function — and those permissions should be granted only for the duration they are needed. Just-in-Time (JIT) access models, where credentials are issued dynamically for defined tasks and expire automatically, dramatically reduce the window of opportunity for credential abuse. This approach aligns with CIS Controls v8 guidance on account management and significantly limits the utility of any stolen token.

Implementing least-privilege across LLM infrastructure requires a current inventory of all NHIs and their associated permissions. Many organizations lack this visibility, which is itself a critical gap that must be addressed before other controls can be effective.
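
To illustrate the JIT idea, the sketch below issues a short-lived, single-scope credential and verifies it on use. It is a simplified, hypothetical token format built on HMAC-SHA256 from the Python standard library, not a replacement for an established token standard or a cloud provider's short-lived credential service. The signing key, identity name, and scope string are assumptions for the example.

```python
import base64
import hashlib
import hmac
import json
import time

SIGNING_KEY = b"demo-signing-key"  # hypothetical; load from a secrets manager in practice


def issue(identity: str, scope: str, ttl_seconds: int, now: float = None) -> str:
    """Issue a signed credential for one scope that expires after ttl_seconds."""
    now = time.time() if now is None else now
    claims = {"sub": identity, "scope": scope, "exp": now + ttl_seconds}
    payload = json.dumps(claims, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    return (
        base64.urlsafe_b64encode(payload).decode()
        + "."
        + base64.urlsafe_b64encode(signature).decode()
    )


def verify(token: str, required_scope: str, now: float = None) -> bool:
    """Accept only an untampered, unexpired credential for the required scope."""
    now = time.time() if now is None else now
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:
        return False  # malformed token: fail closed
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return False
    claims = json.loads(payload)
    return claims["scope"] == required_scope and now < claims["exp"]
```

A credential issued with `ttl_seconds=300` is useless to an attacker six minutes later, and useless immediately for any scope other than the one it was issued for.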

Automated Secret Rotation and Zero-Trust Endpoint Architecture

Automating secret rotation eliminates the operational friction that leads to long-lived static credentials in the first place. Secrets management platforms integrated into your CI/CD pipeline can rotate NHI credentials on a schedule or after defined events — such as a detected anomaly or a code repository exposure — without requiring manual intervention. This directly addresses the extended dwell time that static credentials enable.
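
The scheduling logic behind rotation is small enough to sketch. The example assumes a 90-day maximum age, matching the baseline mentioned in this article's FAQ, and two hypothetical event names for the trigger conditions named above; the actual rotation call would go to whatever secrets management platform you use.

```python
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(days=90)  # assumption: 90-day baseline
# Hypothetical event names for the triggers described in the text.
TRIGGER_EVENTS = {"anomaly_detected", "repository_exposure"}


def needs_rotation(created_at: datetime, events: list, now: datetime) -> bool:
    """Rotate when a trigger event occurred or the credential reached MAX_AGE."""
    if TRIGGER_EVENTS & set(events):
        return True  # event-driven rotation overrides the schedule
    return now - created_at >= MAX_AGE


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
print(needs_rotation(now - timedelta(days=10), [], now))                       # False
print(needs_rotation(now - timedelta(days=1), ["repository_exposure"], now))  # True
```

Checking events before age means a day-old credential found in a leaked repository is rotated immediately instead of waiting out the schedule.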

Adopting a zero-trust architecture for LLM endpoints means no connection to or from an inference API or model management interface is trusted by default, regardless of network location. Every session must be authenticated, authorized, and continuously validated. Combined with session monitoring that logs NHI activity at a granular level, this approach provides both a preventive control and the detection capability needed to identify compromised credentials before significant damage occurs. ISO 27001 and NIST SP 800-207 (Zero Trust Architecture) both provide applicable frameworks for structuring this implementation.
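
In code, the zero-trust principle comes down to a decision function that is evaluated on every request and that never takes network location as a reason to allow. The following is a minimal sketch under that assumption; the policy triples, the `AccessRequest` type, and `authorize` are hypothetical, and a production policy engine would evaluate far richer context.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy: (identity, action, resource) triples that are permitted.
POLICY = {
    ("llm-rag-reader", "read", "document-store"),
    ("ml-ops-admin", "deploy", "model-registry"),
}


@dataclass
class AccessRequest:
    identity: Optional[str]  # None when the caller presented no credential
    credential_valid: bool   # result of verifying the credential for this request
    action: str
    resource: str
    source_network: str      # logged for investigation, never used to grant access


def authorize(request: AccessRequest) -> tuple:
    """Decide one request; return (allowed, reason) so every decision can be logged."""
    if request.identity is None or not request.credential_valid:
        return (False, "unauthenticated")
    if (request.identity, request.action, request.resource) not in POLICY:
        return (False, "not authorized for this action")
    return (True, "allowed by policy")
```

A request from an internal network with no valid credential is refused exactly like one from the internet, and the returned reason gives session monitoring a record of each decision.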

Key Takeaways

- Audit all LLM endpoints immediately — identify every inference API and model management interface exposed to the internet or accessible without strong authentication

- Inventory every NHI in your AI infrastructure, including service accounts, API tokens, and OAuth credentials, and map their permissions against actual functional requirements

- Implement JIT access for NHIs wherever possible to eliminate long-lived static credentials and reduce attacker dwell time after compromise

- Automate secret rotation on a defined schedule and integrate it into your CI/CD pipeline to remove manual rotation as a dependency

- Deploy session monitoring for all NHI activity to detect anomalous behavior patterns that indicate credential abuse or lateral movement

- Adopt zero-trust principles for LLM endpoint access — authenticate and validate every session regardless of origin or network position

Conclusion

Exposed LLM endpoints represent one of the fastest-growing and least-understood attack surfaces in enterprise security today. As organizations deepen their reliance on AI infrastructure, the combination of misconfigured APIs, implicit trust relationships, and over-permissioned NHIs creates conditions where a single exposure point can compromise far more than the AI system itself. Attackers are already aware of this — and they are actively exploiting it.

Security teams must move beyond perimeter-focused thinking and address the identity and access management challenges that LLM infrastructure introduces. Least-privilege provisioning, automated rotation, zero-trust endpoint controls, and continuous NHI monitoring are not optional enhancements — they are the baseline your AI security posture requires. Start with visibility, then build control.

Frequently Asked Questions

Q: What is a Non-Human Identity (NHI) and why does it matter for LLM security? A: A Non-Human Identity is any credential used by a system, service, or automated process rather than a human user — including API keys, service account tokens, and OAuth credentials. In LLM infrastructure, NHIs are how your AI model interacts with databases, APIs, and external services, making them high-value targets for attackers who want broad access without human authentication barriers.

Q: How does prompt injection relate to exposed LLM endpoints? A: Prompt injection exploits the fact that LLMs process instructions and data through the same input channel, making it possible for an attacker to craft inputs that redirect model behavior. When combined with an exposed endpoint, injection attacks can cause the model to exfiltrate internal data, bypass intended restrictions, or trigger unauthorized actions through connected tool integrations — all without exploiting a traditional software vulnerability.

Q: What compliance frameworks apply to LLM endpoint security? A: Organizations subject to GDPR must address the data exfiltration risks that exposed endpoints create, particularly when personal data flows through LLM pipelines. HIPAA-covered entities face similar obligations regarding protected health information accessible via AI tools. PCI DSS and SOC 2 both address access control and monitoring requirements that are directly applicable to NHI credential management and endpoint authentication.

Q: How often should NHI credentials be rotated in LLM environments? A: A commonly cited baseline is to rotate NHI credentials at least every 90 days, with automated rotation preferred over manual processes to eliminate human delay. In high-risk environments, or following any suspected exposure event, immediate rotation is warranted regardless of the standard schedule.

Q: What is the first step an organization should take to reduce LLM endpoint risk? A: The most critical first step is achieving full visibility — mapping every LLM endpoint, every NHI credential, and every permission associated with your AI infrastructure. You cannot apply least-privilege, automate rotation, or detect anomalies against assets you do not know exist. A complete inventory is the foundation every subsequent control depends on.
