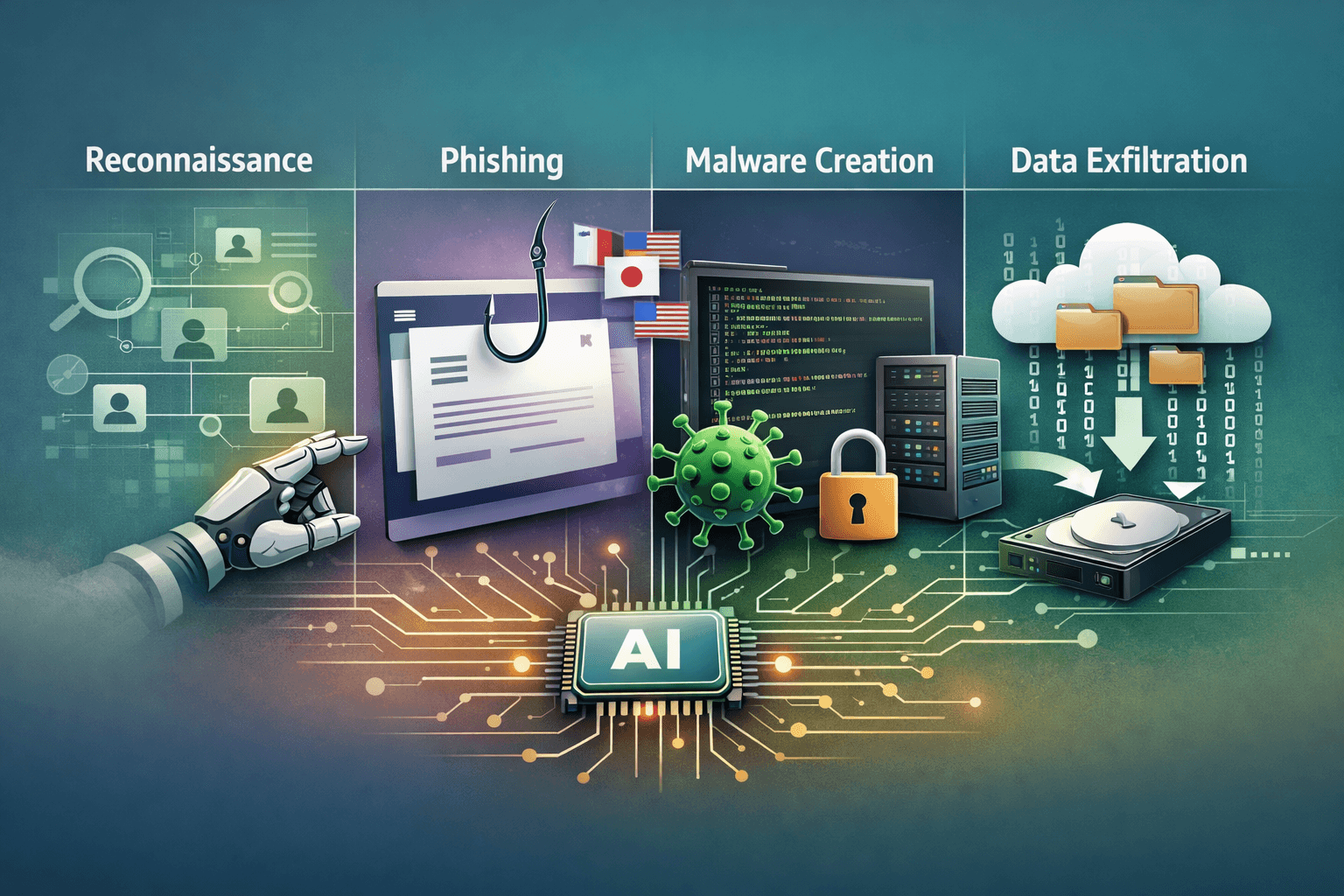

Generative AI has changed the math for attackers. According to Microsoft Threat Intelligence (2025), adversaries now deploy large language models (LLMs) at every phase of the attack lifecycle — from the first reconnaissance search to post-compromise data exfiltration. This is not a theoretical risk. It is an operational reality unfolding across enterprise networks today.

The implications are significant. Phishing emails are becoming harder to detect. Malware variants are iterating faster than signature databases can track. Infrastructure setup that once took specialists days now takes hours. The barrier to entry for sophisticated attacks is collapsing.

This article breaks down exactly how threat actors weaponize generative AI, which attack stages are most affected, and what practical steps security teams can take to adapt their defenses. Whether you manage a SOC, lead an incident response team, or set security strategy, understanding this shift is no longer optional.

How Attackers Use AI Across the Full Attack Lifecycle

Threat actors have moved beyond experimentation. AI-assisted operations now span the entire kill chain, with different capabilities applied at each stage.

Reconnaissance and Target Research

Before any attack lands, adversaries need intelligence. AI dramatically accelerates this phase. Attackers use LLMs to scrape and summarize public data about targets — organizational charts, technology stacks, executive names, partner relationships, and financial disclosures.

What once required hours of manual research now takes minutes. A threat actor can prompt an LLM to synthesize a detailed target profile from open-source intelligence (OSINT), then use that profile to craft highly contextual lures.

Key reconnaissance tasks now assisted by AI:

- Summarizing LinkedIn data to map organizational hierarchies

- Identifying technology vendors from job postings and press releases

- Translating foreign-language business documents and filings

- Generating hypothesis-driven lists of likely attack surfaces

Phishing at Scale and in Every Language

Phishing remains the dominant initial access vector, and AI has made it dramatically more effective. Microsoft has observed threat actors using LLMs to draft convincing multilingual phishing emails, translate lures for regional campaigns, and adapt tone and register to match specific industries or personas.

The grammar errors and awkward phrasing that once helped users identify phishing attempts are disappearing. AI-generated lures now mimic internal communication styles with unsettling accuracy.

Table: AI-Enhanced Phishing vs. Traditional Phishing

| Characteristic | Traditional Phishing | AI-Enhanced Phishing |
|---|---|---|
| Language quality | Often poor grammar | Native-level fluency |
| Personalization | Generic or low-context | Role and name-specific |
| Language support | Single language typically | Multilingual in minutes |
| Production speed | Hours per campaign | Minutes per campaign |
| Detection difficulty | Moderate | High |

Pro Tip: User awareness training must evolve beyond "look for typos." Behavioral anomaly detection and sender verification through DMARC, DKIM, and SPF have become more critical than ever as linguistic quality ceases to be a reliable signal.
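As a rough illustration of the sender-verification point, the sketch below reads the SPF, DKIM, and DMARC verdicts that a receiving mail server records in the `Authentication-Results` header. It uses only Python's standard `email` module. The function names, the sample message, and the "flag unless DMARC passed" policy are assumptions made for this example, not a recommended production rule.

```python
import re
from email import message_from_string

# Matches "spf=pass", "dkim=fail", "dmarc=none", etc. inside the header value.
_RESULT_RE = re.compile(r"\b(spf|dkim|dmarc)=([a-z]+)", re.IGNORECASE)


def auth_results(raw_message: str) -> dict:
    """Return the spf/dkim/dmarc verdicts found in Authentication-Results."""
    msg = message_from_string(raw_message)
    verdicts = {"spf": "missing", "dkim": "missing", "dmarc": "missing"}
    for header in msg.get_all("Authentication-Results", []):
        for mechanism, result in _RESULT_RE.findall(header):
            verdicts[mechanism.lower()] = result.lower()
    return verdicts


def is_suspicious(verdicts: dict) -> bool:
    """Hypothetical policy: flag anything where DMARC did not pass."""
    return verdicts["dmarc"] != "pass"


# Illustrative message; all domains are placeholders.
SAMPLE = (
    "Authentication-Results: mx.example.com;\n"
    " spf=pass smtp.mailfrom=example.org;\n"
    " dkim=fail header.d=example.org;\n"
    " dmarc=fail header.from=example.org\n"
    "From: CFO <cfo@example.org>\n"
    "Subject: Urgent wire transfer\n"
    "\n"
    "Please process today.\n"
)

if __name__ == "__main__":
    verdicts = auth_results(SAMPLE)
    print(verdicts, "suspicious:", is_suspicious(verdicts))
```

Note that this check ignores the message body entirely, which is the point: a fluent, well-written lure still fails it. In practice, only trust `Authentication-Results` headers stamped by your own mail gateway, since a sender can include forged copies of the header.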

Malware Development and Infrastructure Deployment

AI is lowering the technical floor for malware creation and campaign infrastructure setup. Threat actors who previously lacked coding expertise can now generate functional malicious code with LLM assistance.

AI-Assisted Malware Generation

Microsoft Threat Intelligence has documented cases where attackers used LLMs to generate, debug, and refine malicious scripts. This includes everything from basic payload droppers to more sophisticated post-exploitation tools. When models refuse direct requests, attackers turn to jailbreaking — crafting prompts designed to bypass safety guardrails and coax restricted outputs.

Common malware development tasks where AI is now observed:

- Generating shellcode and payload delivery scripts

- Debugging obfuscated malware that evades static analysis

- Translating malware between programming languages for cross-platform use

- Producing polymorphic code variations to defeat signature detection
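The last item in the list above explains why exact-match signatures struggle. The toy sketch below uses two harmless Python snippets that do the same thing but differ in variable names, comments, and indentation. Their SHA-256 hashes differ, while a fingerprint built from the token structure stays the same. The `structural_fingerprint` function is a hypothetical illustration of the idea behind structure-aware matching, not how any particular security product works.

```python
import hashlib
import io
import keyword
import tokenize

# Comments and blank-line tokens carry no structure, so they are ignored.
_SKIP = {tokenize.COMMENT, tokenize.NL}


def exact_hash(source: str) -> str:
    """Classic signature: a hash of the exact bytes."""
    return hashlib.sha256(source.encode("utf-8")).hexdigest()


def structural_fingerprint(source: str) -> str:
    """Hash the token shape, ignoring names, literals, comments, and layout."""
    shape = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type in _SKIP:
            continue
        if tok.type == tokenize.OP or keyword.iskeyword(tok.string):
            shape.append(tok.string)  # keep operators and keywords verbatim
        else:
            shape.append(tokenize.tok_name[tok.type])  # NAME, NUMBER, ...
    return hashlib.sha256(" ".join(shape).encode("utf-8")).hexdigest()


# Two benign, functionally equivalent snippets with cosmetic differences.
VARIANT_A = "total = 0\nfor item in values:\n    total += item\n"
VARIANT_B = "acc = 0  # renamed\nfor v in values:\n        acc += v\n"

if __name__ == "__main__":
    print("exact hashes equal:     ", exact_hash(VARIANT_A) == exact_hash(VARIANT_B))
    print("structural prints equal:", structural_fingerprint(VARIANT_A) == structural_fingerprint(VARIANT_B))
```

A structural fingerprint like this is itself easy to evade by reordering or padding code, which is why the article's later emphasis on behavioral detection matters more than any smarter static hash.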

Infrastructure Spin-Up: The Coral Sleet Case

The threat group Coral Sleet demonstrated how AI accelerates campaign infrastructure deployment. Analysts observed the group using AI tools to rapidly spin up fake company websites, configure hosting environments, and troubleshoot deployment issues — all in support of social engineering operations targeting research institutions.

What previously required specialized infrastructure knowledge became a streamlined workflow. AI handled the configuration logic, troubleshooting, and content generation while the operators focused on targeting and delivery.

Table: Traditional vs. AI-Assisted Infrastructure Setup

| Task | Manual Effort (Hours) | AI-Assisted Effort (Hours) |
|---|---|---|
| Fake site creation | 8–16 | 1–3 |
| Hosting configuration | 4–8 | <1 |
| Content localization | 6–12 per language | <1 per language |
| Troubleshooting deployments | Variable | Significantly reduced |
| C2 scaffold setup | 10–20 | 2–5 |

Post-Compromise Operations and Data Exfiltration

Once inside a network, threat actors face a different problem: too much data and too little time to process it. AI solves this. Attackers now use LLMs to summarize stolen datasets, identify high-value documents, and prioritize exfiltration targets.

AI-Powered Data Triage

After compromising an endpoint or cloud environment, attackers can feed harvested documents into AI systems to extract actionable intelligence quickly. A stolen file share that would take days to manually review can be summarized in minutes. Contract terms, personnel records, intellectual property, and credential repositories all become rapidly searchable.

This shortens the time between initial access and meaningful damage, compressing the response window defenders previously relied on.
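With a shorter response window, defenders benefit from alerting on unusual outbound volume as early as possible. The sketch below is one simple way to do that: it compares each host's outbound traffic today against that host's own history using a robust z-score based on the median absolute deviation (MAD). The host names, the traffic figures, and the 3.5 threshold are all illustrative assumptions.

```python
from statistics import median


def mad_score(history: list[float], today: float) -> float:
    """Robust z-score of today's value against a host's own history."""
    center = median(history)
    mad = median(abs(x - center) for x in history)
    if mad == 0:
        # Perfectly flat history: any change at all is maximally unusual.
        return 0.0 if today == center else float("inf")
    # 0.6745 scales MAD so the score is comparable to a standard z-score.
    return 0.6745 * (today - center) / mad


def flag_hosts(history_by_host: dict, today_by_host: dict, threshold: float = 3.5) -> list:
    """Return hosts whose outbound volume today is far above their baseline."""
    flagged = []
    for host, today in today_by_host.items():
        history = history_by_host.get(host)
        if history and mad_score(history, today) > threshold:
            flagged.append(host)
    return sorted(flagged)


# Hypothetical outbound megabytes per day for two hosts.
HISTORY = {
    "fileserver-02": [120, 135, 110, 128, 140, 125, 131],
    "laptop-17": [40, 38, 45, 41, 39, 44, 42],
}
TODAY = {"fileserver-02": 2400, "laptop-17": 43}

if __name__ == "__main__":
    print(flag_hosts(HISTORY, TODAY))
```

Median-based scoring is used here instead of mean and standard deviation so that one earlier spike in a host's history does not inflate the baseline and hide a later one.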

Agentic AI: The Emerging Frontier

Microsoft's report flags early experiments with agentic AI as a significant forward-looking risk. Unlike standard LLM use where a human drives each prompt, agentic systems can autonomously chain tasks, adapt to intermediate results, and pursue objectives over extended workflows.

For attackers, this means future intrusion workflows could operate with far less human involvement. An agentic system could theoretically conduct reconnaissance, identify vulnerabilities, generate a payload, establish persistence, and begin exfiltration — adapting its approach at each step based on what it encounters.

Table: Current vs. Emerging AI Threat Capabilities

| Capability | Current State (2025) | Emerging Risk (Near-Term) |
|---|---|---|
| Phishing generation | Active and widespread | More targeted, harder to detect |
| Malware creation | Active, with jailbreaking | Faster iteration, cross-platform |
| Infrastructure automation | Active (e.g., Coral Sleet) | Fully automated deployment pipelines |
| Data triage post-compromise | Active | Real-time AI-driven exfiltration |
| Agentic attack workflows | Early experiments observed | Autonomous multi-stage intrusions |

What This Means for Defenders

The threat landscape is not just evolving — it is accelerating. Security teams need to recalibrate assumptions built around human-speed adversaries.

Detection Strategies Must Adapt

Signature-based and rule-based detection systems face new pressure. AI-generated phishing bypasses linguistic filters. AI-generated malware variants outpace signature updates. Defenders need to shift investment toward behavioral detection, anomaly-based alerting, and AI-assisted threat hunting.

Key defensive adaptations for modern security operations:

- Deploy email security with behavioral and contextual analysis, not just content filtering

- Increase emphasis on identity-based controls and zero-trust architecture

- Use AI-assisted SIEM (Security Information and Event Management) correlation to match adversary speed

- Prioritize threat intelligence feeds that specifically track AI-assisted TTPs (Tactics, Techniques, and Procedures) and map them to MITRE ATT&CK
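The correlation item in the list above can be sketched in a few lines. The example below joins two event types per user inside a time window, here a click on an emailed link followed shortly by a sign-in from a new device. The event kinds, field names, and 30-minute window are hypothetical; a real SIEM would express this in its own query language.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    user: str
    kind: str  # hypothetical types, e.g. "link_click", "new_device_signin"
    time: datetime


def correlate(events, first_kind: str, second_kind: str, window: timedelta) -> list:
    """Return (user, first_time, second_time) where second follows first within window."""
    hits = []
    last_first = {}  # user -> most recent event of first_kind
    for ev in sorted(events, key=lambda e: e.time):
        if ev.kind == first_kind:
            last_first[ev.user] = ev
        elif ev.kind == second_kind:
            prior = last_first.get(ev.user)
            if prior is not None and ev.time - prior.time <= window:
                hits.append((ev.user, prior.time, ev.time))
    return hits


# Illustrative events for two users.
T0 = datetime(2025, 1, 15, 9, 0)
EVENTS = [
    Event("alice", "link_click", T0),
    Event("alice", "new_device_signin", T0 + timedelta(minutes=12)),
    Event("bob", "link_click", T0),
    Event("bob", "new_device_signin", T0 + timedelta(hours=5)),
]

if __name__ == "__main__":
    for user, clicked, signed_in in correlate(
        EVENTS, "link_click", "new_device_signin", timedelta(minutes=30)
    ):
        print(f"{user}: click at {clicked}, new-device sign-in at {signed_in}")
```

Neither event is alarming on its own. The value comes from the pairing, which is a behavioral signal that does not depend on how well the phishing email was written.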

Security Frameworks Remain Essential

Established frameworks provide the structure defenders need to respond systematically. NIST's Cybersecurity Framework (CSF 2.0), CIS Controls v8, and ISO 27001 all offer guidance that applies directly to AI-augmented threats. The fundamentals — patching, least privilege, MFA, and logging — become more important, not less, as adversary capability increases.

Important: The MITRE ATT&CK framework is actively incorporating AI-assisted techniques into its knowledge base. Security teams should review updated TTPs quarterly and map detection coverage accordingly.
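One lightweight way to run the quarterly coverage review described above is to keep a list of priority techniques and a mapping from each detection rule to the techniques it addresses, then compute the difference. In the sketch below the technique IDs and names are taken from ATT&CK, but the choice of techniques, the rule names, and the rule-to-technique mapping are hypothetical placeholders.

```python
# Priority techniques to check (IDs and names from MITRE ATT&CK;
# the selection itself is illustrative).
PRIORITY_TECHNIQUES = {
    "T1589": "Gather Victim Identity Information",
    "T1583": "Acquire Infrastructure",
    "T1566": "Phishing",
    "T1071": "Application Layer Protocol",
    "T1041": "Exfiltration Over C2 Channel",
}

# Hypothetical detection rules and the techniques each one claims to cover.
DETECTION_RULES = {
    "mail-auth-failure": {"T1566"},
    "rare-outbound-volume": {"T1041"},
    "beaconing-interval": {"T1071"},
}


def coverage_gaps(techniques: dict, rules: dict) -> list:
    """Return technique IDs that no detection rule is mapped to."""
    covered = set().union(*rules.values())
    return sorted(t for t in techniques if t not in covered)


if __name__ == "__main__":
    for technique_id in coverage_gaps(PRIORITY_TECHNIQUES, DETECTION_RULES):
        print(f"No detection mapped: {technique_id} {PRIORITY_TECHNIQUES[technique_id]}")
```

With the placeholder data, the two uncovered techniques are the pre-compromise ones (T1583 and T1589), which reflects a common pattern: reconnaissance and infrastructure setup happen outside the defender's network and are hard to detect directly.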

Key Takeaways

- Treat phishing as a zero-grammar-trust problem — AI eliminates linguistic red flags, so detection must shift to behavioral and contextual signals

- Accelerate your own detection cycles — adversaries use AI to iterate faster; your threat hunting and signature updates must keep pace

- Map your defenses to AI-augmented kill chain stages — reconnaissance, delivery, C2, and exfiltration all face new AI-driven pressure

- Monitor jailbreaking techniques actively — understanding how attackers bypass LLM guardrails informs both threat intelligence and vendor evaluation

- Plan for agentic threats now — autonomous AI attack workflows are experimental today but could become operational within your planning horizon

- Strengthen post-compromise detection — AI-accelerated data triage means your window between breach and exfiltration is shrinking

Conclusion

Generative AI has handed adversaries a capability multiplier that operates across the entire attack lifecycle. Phishing campaigns are more convincing, malware development is faster, infrastructure setup is cheaper, and post-compromise operations are more efficient. The threat groups already deploying these capabilities — like Coral Sleet — are not outliers. They are early indicators of where the broader adversary ecosystem is heading.

For security professionals, this demands a response grounded in the same principles that have always defined good security practice: defense in depth, behavioral detection, strong identity controls, and continuous adaptation. The tools and frameworks exist. The urgency to apply them has never been higher. Start by auditing your current detection coverage against AI-augmented attack patterns, and use frameworks like MITRE ATT&CK to identify the gaps.

Frequently Asked Questions

Q: Are all threat actors now using AI in their attacks?

A: Not universally, but adoption is broadening rapidly. Microsoft Threat Intelligence indicates that AI use is currently most prevalent in text, code, and media generation tasks. Nation-state actors and sophisticated criminal groups are leading adoption, but as tools become more accessible, lower-tier actors will follow.

Q: How can organizations detect AI-generated phishing emails?

A: Traditional content filters that flag grammar errors are becoming less reliable. Organizations should prioritize email authentication protocols (DMARC, DKIM, SPF), deploy solutions with behavioral and contextual analysis, and train users to verify sender identity through secondary channels rather than relying on message content alone.

Q: What is jailbreaking in the context of AI and cybersecurity?

A: Jailbreaking refers to prompt engineering techniques that attempt to bypass an AI model's built-in safety guardrails. Threat actors craft specific inputs designed to coax models into producing content they would otherwise refuse — such as malware code or manipulation scripts. This is an active area of adversarial research on both sides.

Q: What is agentic AI and why is it a security concern?

A: Agentic AI refers to systems that can autonomously pursue multi-step objectives without human prompting at each stage. Unlike standard LLM use, agentic systems can chain tasks, adapt to results, and operate over extended workflows. For attackers, this raises the prospect of intrusion sequences that require minimal human involvement — compressing attack timelines significantly.

Q: Which compliance frameworks address AI-assisted cyber threats?

A: No compliance framework currently addresses AI-assisted attacks specifically, but NIST CSF 2.0, ISO 27001, and CIS Controls v8 provide relevant structural guidance. NIST has also published its AI Risk Management Framework (AI RMF), which is increasingly referenced in enterprise security governance. Organizations in regulated industries should monitor GDPR, HIPAA, and PCI DSS guidance for AI-specific updates as regulators begin to respond.
