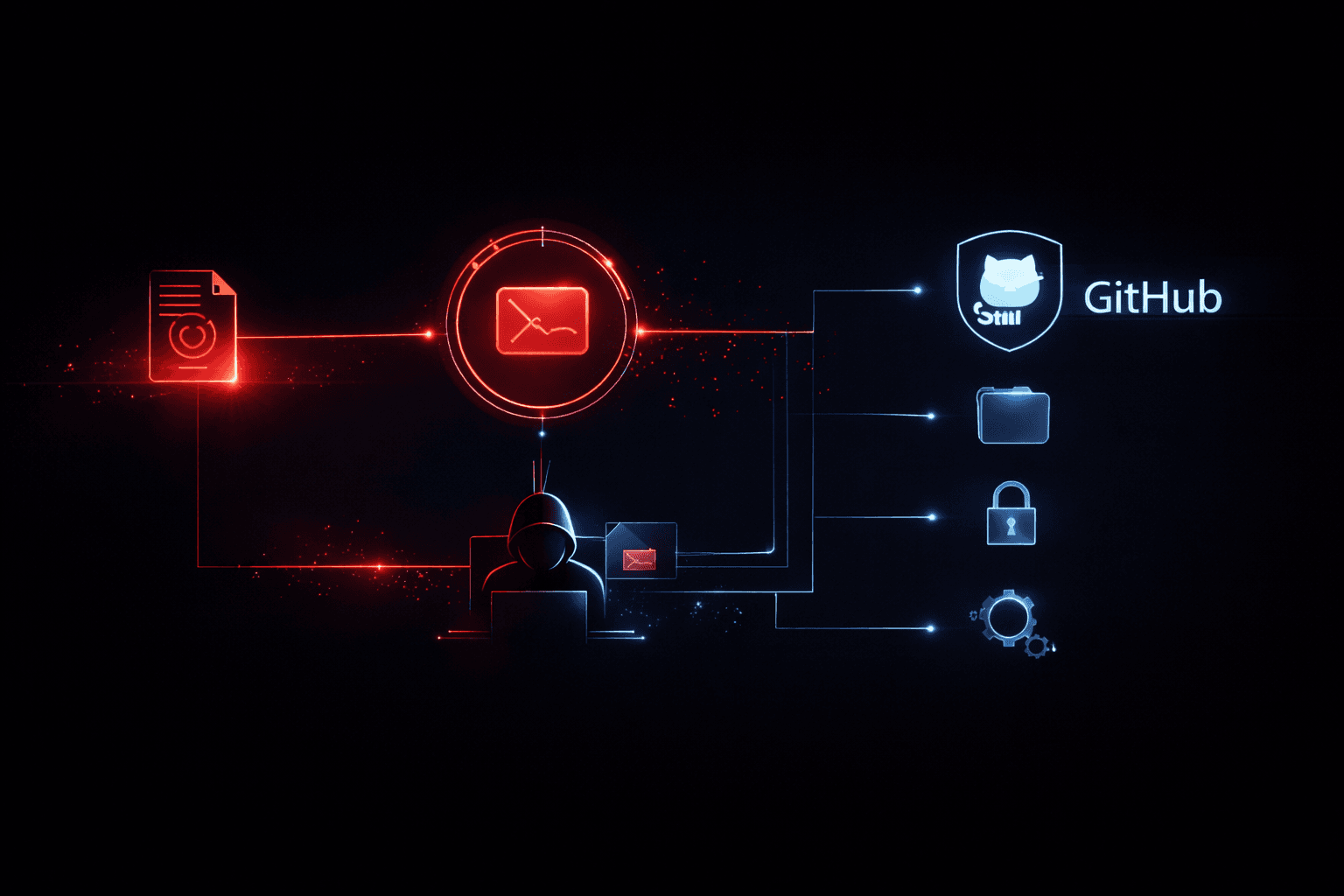

In early 2025, BeyondTrust Phantom Labs disclosed a vulnerability that should have shaken every organization relying on AI-assisted development tooling: a critical command injection flaw in OpenAI's Codex integration capable of stealing GitHub OAuth access tokens. This wasn't a theoretical proof-of-concept buried in an academic paper. It was a practical, exploitable attack path targeting the exact AI coding workflows that development teams have normalized over the past two years.

If your developers use Codex to work with GitHub repositories, as a growing number do, a single successful exploit could hand an attacker write access to private codebases, CI/CD pipelines, and every secret stored within them. This post breaks down how the attack works, why it succeeds, what the realistic blast radius looks like, and what security and DevSecOps teams need to implement now.

How the Command Injection Vulnerability Actually Works

The Unicode Branch Name Trick

The flaw exploits a deceptively simple mechanism: hidden Unicode characters embedded in Git branch names. Unicode includes a category of control characters—directional overrides, zero-width joiners, invisible formatting markers—that render invisibly in most interfaces. When an attacker names a branch using these characters, the branch name appears benign in GitHub's UI. To a developer reviewing a pull request, nothing looks unusual.

The attack works because Codex processes Git operations by constructing shell commands that include user-controlled input, in this case branch names (MITRE ATT&CK T1059). When Codex executes a git command against a branch whose name carries such a hidden payload, the shell parses the concealed text as command syntax rather than as part of a single string argument, so the payload executes in the Codex runtime environment.
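To make the failure mode concrete, here is a minimal sketch of the vulnerable pattern next to a safer one. It is not Codex's actual code, which is not shown here. `echo` stands in for `git` so the example runs without a repository, and the function names and the payload are hypothetical. It assumes a POSIX system.

```python
import subprocess


def run_via_shell(branch: str) -> str:
    """Vulnerable pattern: the branch name is interpolated into a shell string."""
    # `echo` is a stand-in for a git subcommand (assumption for illustration).
    result = subprocess.run(
        f"echo checkout {branch}", shell=True, capture_output=True, text=True
    )
    return result.stdout


def run_via_argv(branch: str) -> str:
    """Safer pattern: arguments are passed as a list and no shell parses them."""
    result = subprocess.run(
        ["echo", "checkout", branch], capture_output=True, text=True
    )
    return result.stdout


# Hypothetical attacker-controlled branch name carrying a second command.
payload = "main; echo INJECTED"

print(run_via_shell(payload))  # the shell runs two separate commands
print(run_via_argv(payload))   # the whole name stays a single argument
```

In the first call the shell treats `;` as a command separator and runs the attacker's `echo` as its own command. In the second call no shell is involved, so the same text is just an odd-looking argument.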

Why the Environment Makes This Dangerous

This is where cause meets catastrophic impact. Codex doesn't execute in a hermetically sealed sandbox without credentials. It runs with access to the GitHub OAuth token that authenticated the session—a token that, in most developer environments, carries full repo scope, granting read and write access to every private repository the user can touch. The injected command simply reads the token from the environment and forwards it to an attacker-controlled HTTPS endpoint (T1048, T1552.001). The exfiltration takes milliseconds and leaves minimal trace in standard Git audit logs.

Important: Many organizations have not audited what scopes their Codex or AI coding assistant integrations request when authenticating with GitHub. An OAuth token with repo scope used in an AI context is functionally a master key to your codebase. Review granted scopes immediately.
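A scope review can start small. For classic and OAuth app tokens, GitHub's REST API reports the granted scopes in the `X-OAuth-Scopes` response header. The sketch below only parses such a header value and flags scopes that this example treats as broad. Fetching the header is left out, and the `BROAD_SCOPES` set is an assumed policy choice, not an official list.

```python
from __future__ import annotations

# Assumed policy: scopes this sketch considers too broad for an AI coding tool.
BROAD_SCOPES = {"repo", "admin:org", "workflow", "write:packages", "delete_repo"}


def parse_scopes(header_value: str) -> set[str]:
    """Split an X-OAuth-Scopes value such as 'repo, read:org' into a set."""
    return {s.strip() for s in header_value.split(",") if s.strip()}


def broad_scopes(header_value: str) -> set[str]:
    """Return the granted scopes that appear in the BROAD_SCOPES policy set."""
    return parse_scopes(header_value) & BROAD_SCOPES


print(sorted(broad_scopes("repo, read:org, workflow")))  # ['repo', 'workflow']
```

Any non-empty result is a prompt to ask whether the integration needs that scope or could run on a fine-grained token instead.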

The Supply Chain Blast Radius

A stolen GitHub OAuth token is not just a codebase problem. It is a supply chain problem. Consider what a token with full repo scope enables in practice.

| Access Gained | Realistic Attack Scenario | Downstream Impact |
|---|---|---|
| Private repo read access | Clone source code, harvest hardcoded secrets | IP theft, credential reuse across services |
| Push access to main branch | Inject malicious code into production branch | Compromised builds, backdoored releases |
| GitHub Actions secrets | Read CI/CD environment variables | AWS keys, Docker registry creds, signing keys exposed |
| Workflow file modification | Alter .github/workflows/ to add exfiltration step | Persistent supply chain access |
| Package publishing rights | Push poisoned versions to npm/PyPI | Downstream customer and partner compromise |

The 2024 GitHub Supply Chain Security Report documented a measurable increase in OAuth token abuse as the primary entry vector for CI/CD compromises—not credential stuffing, not phishing, but token theft from developer tooling integrations. Codex-style vulnerabilities represent exactly this attack surface.

Pro Tip: Treat GitHub OAuth tokens used by AI coding tools as equivalent to privileged service accounts. Apply the same controls: short token TTLs, fine-grained access tokens scoped to specific repositories, and alert rules on any token used outside of expected IP ranges or user-agents.
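As a rough illustration of the IP-range alert, the sketch below filters exported audit events against an allowlist of networks. The event shape, the `actor_ip` and `hashed_token` field names, and the networks themselves are assumptions for this example. Check them against your own audit log export before relying on them.

```python
from __future__ import annotations

import ipaddress

# Hypothetical allowlist: replace with your corporate egress and CI ranges.
EXPECTED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def is_expected_ip(ip: str) -> bool:
    """True if the address falls inside any expected network."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in EXPECTED_NETWORKS)


def flag_events(events: list[dict]) -> list[dict]:
    """Return events whose source address is outside every expected network."""
    return [e for e in events if not is_expected_ip(e["actor_ip"])]


# Illustrative events; field names are assumed, not taken from a real export.
events = [
    {"action": "git.clone", "actor_ip": "203.0.113.10", "hashed_token": "abc"},
    {"action": "git.clone", "actor_ip": "192.0.2.50", "hashed_token": "abc"},
]

for event in flag_events(events):
    print(event["actor_ip"], event["action"])
```

A production rule would live in the SIEM rather than a script, but the logic is the same: the token is known, the source address is not.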

Detection: What Realistic SOC Coverage Looks Like

Most organizations have no detection logic covering this attack path because they haven't modeled AI coding tools as an attack surface. A developer using Codex looks like a developer using Codex—until the injected command fires.

| Detection Layer | Signal to Monitor | Framework Reference |
|---|---|---|
| GitHub audit logs | Token use from unexpected IPs or automation contexts | CIS Control 8.11, NIST DE.CM-3 |
| Network egress | Outbound HTTP/S from CI runner or dev workstation to unknown endpoints | CIS Control 13, NIST DE.CM-1 |
| Git branch hygiene | Branch names containing non-ASCII or Unicode control characters | NIST PR.DS-1 |
| OAuth token activity | Token usage volume spikes, access to repos beyond normal scope | ISO 27001 A.9.2.5 |
| CI/CD pipeline changes | Workflow file modifications not tied to a reviewed PR | CIS Control 4, NIST DE.AE-3 |

Detection at the branch-creation stage is most valuable—catching anomalous Unicode characters in branch names before Codex ever processes them. GitHub's API exposes branch metadata, and a simple pre-receive hook or webhook-triggered script can flag or reject branch names containing Unicode in non-printable ranges.
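The check itself can be short. The sketch below uses Python's standard `unicodedata` module to flag characters in control, format, and non-ASCII-space categories. The set of blocked categories is an assumed policy, and wiring the function into a pre-receive hook or webhook handler is left out.

```python
from __future__ import annotations

import unicodedata

# Assumed policy. Cc = control, Cf = format (zero-width joiners, bidi
# overrides), Zs/Zl/Zp = space and line separators, Co/Cn = private use
# and unassigned code points.
BLOCKED_CATEGORIES = {"Cc", "Cf", "Zs", "Zl", "Zp", "Co", "Cn"}


def suspicious_characters(branch: str) -> list[tuple[int, str]]:
    """Return (index, 'U+XXXX') for every character in a blocked category."""
    return [
        (i, f"U+{ord(ch):04X}")
        for i, ch in enumerate(branch)
        if unicodedata.category(ch) in BLOCKED_CATEGORIES
    ]


def is_allowed_branch_name(branch: str) -> bool:
    """True if the name contains no character from a blocked category."""
    return not suspicious_characters(branch)


print(suspicious_characters("feature\u202e/login"))  # [(7, 'U+202E')]
print(is_allowed_branch_name("feature/login"))       # True
```

This version deliberately allows ordinary non-ASCII letters, so branch names in other languages still pass. A stricter policy could reject everything outside printable ASCII.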

Incident Response Considerations

If you discover a GitHub token may have been exfiltrated via this vector, the response sequence matters. Under SOC 2 and NIST SP 800-61, the priority is containment before investigation: revoke the compromised token immediately, rotate all secrets accessible from any workflow it touched, and treat every repository it could read as potentially compromised. Do not wait for forensic confirmation before revoking—the cost of a false positive revocation is a developer inconvenience. The cost of delayed revocation is a poisoned production pipeline.

Mitigations That Actually Reduce Risk

Patching Codex or waiting for OpenAI to push a fix is necessary but insufficient as a standalone response. The underlying vulnerability class—AI tool command injection via unsanitized input—will recur in different integrations. Structural controls matter more than single-vendor patches.

| Control | Implementation | Risk Reduction |
|---|---|---|
| Fine-grained GitHub tokens | Scope tokens to specific repos, no wildcard repo access | Limits blast radius per integration |
| Branch name sanitization | Pre-receive hooks blocking Unicode control characters | Prevents injection trigger |
| Token TTL enforcement | Expire OAuth tokens after 8–24 hours, rotate on each session | Reduces value of stolen token |
| Network egress filtering | Block outbound from CI runners to non-allowlisted destinations | Breaks exfiltration step |
| AI tool sandboxing | Run Codex and similar tools in isolated environments without ambient credentials | Removes credential access from exploit path |
| SAST on workflow files | Automated review of any .github/workflows/ change | Catches post-compromise persistence |
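The "without ambient credentials" idea can be sketched at the process level: start the tool's child processes with an environment that has credential-like variables removed. The marker list and function names below are hypothetical, and name-based filtering is a coarse stand-in for real isolation such as a container or separate account.

```python
from __future__ import annotations

import os
import subprocess
import sys

# Hypothetical markers for variable names that usually hold credentials.
SENSITIVE_MARKERS = ("TOKEN", "SECRET", "PASSWORD", "KEY", "CREDENTIAL")


def scrubbed_env(env: dict[str, str]) -> dict[str, str]:
    """Copy env, dropping variables whose names contain a sensitive marker."""
    return {
        name: value
        for name, value in env.items()
        if not any(marker in name.upper() for marker in SENSITIVE_MARKERS)
    }


def run_without_credentials(argv: list[str]) -> subprocess.CompletedProcess:
    """Run a child process with credential-like variables removed."""
    return subprocess.run(
        argv, env=scrubbed_env(dict(os.environ)), capture_output=True, text=True
    )


# Demonstration with a placeholder value, not a real token.
os.environ["GITHUB_TOKEN"] = "example-not-a-real-token"
child = run_without_credentials(
    [sys.executable, "-c", "import os; print(os.environ.get('GITHUB_TOKEN'))"]
)
print(child.stdout.strip())  # prints "None"
```

An injected command that runs inside such a child process finds no token to read from its environment, which removes the credential from that exploit path even if the injection itself succeeds.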

NIST SP 800-218 (Secure Software Development Framework) explicitly calls out the need to validate all inputs processed by development tooling—a principle that applies directly to AI-assisted coding environments that consume repository metadata.

Key Takeaways

- Audit all GitHub OAuth token scopes granted to AI development tools and replace broad repo tokens with fine-grained, repository-scoped alternatives immediately.

- Implement branch name validation as a pre-receive hook or webhook to block Unicode control characters; this disrupts the injection trigger before execution.

- Add GitHub audit log monitoring to your SIEM with alerts for token usage from anomalous source IPs, user-agents, or access patterns outside a developer's normal behavior baseline.

- Treat CI/CD environment secrets as compromised whenever a GitHub token with Actions access is revoked for suspected theft—rotate downstream credentials proactively.

- Model AI coding assistants as privileged integrations in your threat model, not as passive tools; they execute code in credentialed environments and must be assessed accordingly.

- Run tabletop exercises simulating a token exfiltration through an AI tool to validate that your detection, revocation, and secret-rotation playbooks work end-to-end under pressure.

Conclusion

The OpenAI Codex command injection vulnerability exposes a broader truth: as AI coding tools embed deeper into development workflows, they inherit the attack surface of everything they touch. A hidden Unicode character in a branch name was sufficient to traverse from an open-source repository to full private codebase access and CI/CD secret exposure. The flaw itself is patchable. The underlying pattern—AI tools consuming unsanitized input in credentialed environments—is not going away.

Security teams that haven't explicitly threat-modeled their AI development integrations are carrying unquantified risk right now. The practical next step is an OAuth token audit: pull every token your organization has granted to AI coding tools, review its scope against what the tool actually needs, and replace broad tokens with fine-grained alternatives. That single action closes the most dangerous part of this blast radius before a patch is even available.

Frequently Asked Questions

What is the Codex command injection vulnerability in plain terms?

OpenAI's Codex integration processed Git branch names without sanitizing hidden Unicode control characters. An attacker who controls a branch name—which is trivially achievable by submitting a pull request—could embed invisible shell commands in that name. When Codex executed git operations against the branch, the hidden commands ran in the Codex environment, which had access to the user's GitHub authentication token.

Could this affect developers who haven't installed anything special?

Yes, if they use any interface that invokes Codex against GitHub repositories. The attack requires no special setup on the victim's end, only that Codex processes a branch name crafted by the attacker. A pull request from an external contributor is sufficient to introduce the malicious branch name.

How is this different from a standard XSS or injection attack?

Architecturally similar, but the trusted context is different. Traditional command injection targets web servers or OS-level processes. This targets an AI coding assistant running with developer-level GitHub credentials, a trusted context where the injected payload immediately has access to sensitive tokens, not just system resources. The impact is scoped specifically to the software supply chain.

Does revoking the token actually help if the attacker already has it?

Revoking stops ongoing abuse but does not undo access already exercised. If the token was used to read repositories or download secrets before revocation, that data is already compromised. Revocation combined with a full audit of what the token accessed (GitHub provides per-token audit logs) is the correct response. Assume any repository accessible to the token was cloned.

Do other AI coding tools have the same exposure?

Potentially. Any AI coding assistant that constructs shell commands incorporating repository metadata (branch names, commit messages, tag names) without input sanitization carries the same vulnerability class. GitHub Copilot, Cursor, and similar tools that invoke Git operations should be evaluated against the same threat model. The safe assumption is that all such integrations should use fine-grained tokens with minimal scope until explicit security assessments confirm otherwise.
